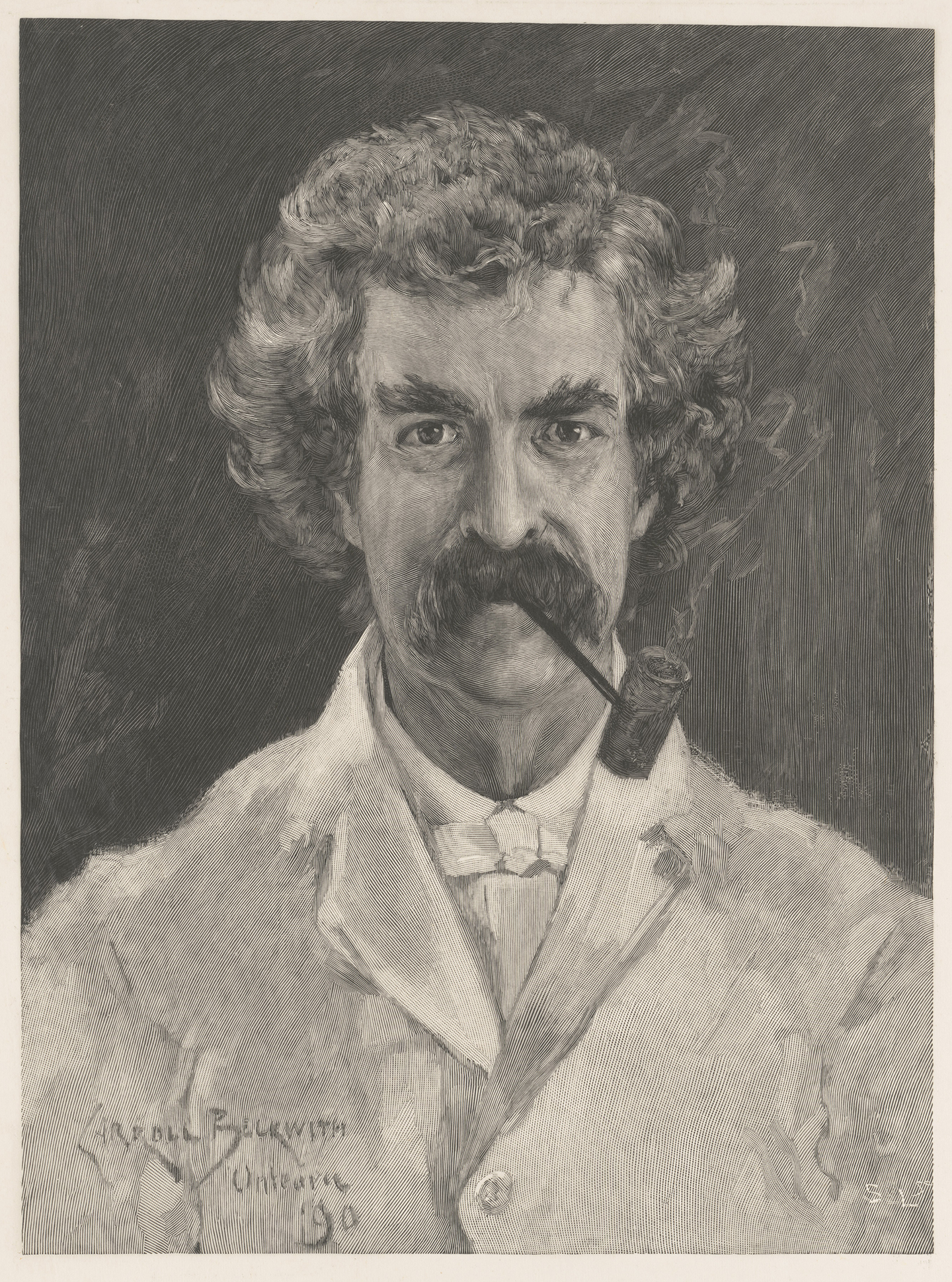

Samuel F.B. Morse (1791–1872) is famous throughout the world for co-inventing the electromagnetic telegraph and developing his namesake Morse code. The great societal impact of his scientific career is coupled with a lesser known but influential artistic legacy. In his lifetime, Morse was a critically acclaimed artist, especially in the genre of portraiture.

Trained in England and France, he was passionate about fostering art appreciation in the United States. After he struggled to receive commissions for his grand ambition of history painting, Morse abandoned his art career for inventing; history was then made. However, his important canvases continue to be admired, and his educational efforts nurtured subsequent generations of American artists.

An Aspiring Artist

Born in Charlestown, Massachusetts, Morse was the son of a notable Congregationalist minister. His father, Jedidiah Morse, is known as the “father of American geography,” having written the first book on the subject.

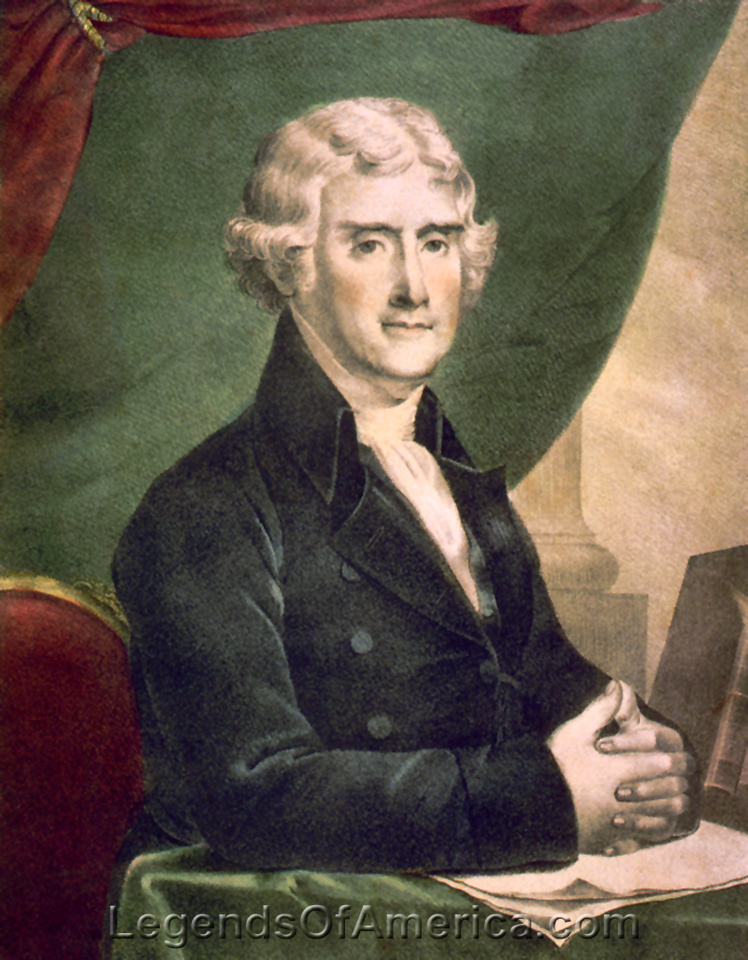

After returning from England in 1815, Morse began to work as a portraitist throughout the United States. He received municipal and private commissions to paint prominent citizens. In 1819, the city of Charleston commissioned a portrait of President James Monroe (1758–1831) to commemorate his visit, the first by a sitting president since George Washington's. A second version by Morse, circa 1819, is part of the White House Collection and is displayed in the Blue Room.

Exalting Democracy

A monumental painting, now part of Washington’s National Gallery of Art, is “The House of Representatives.” Painted from 1821 to 1822 and probably reworked the following year, it was the artist’s first grand painting. In it, Morse exalts American democracy. He depicts the stately House of Representatives chamber with its impressive domed ceiling, columns with carved capitals, dramatic crimson-red curtains, and theater-like boxes.

Morse renders the scene with skillful atmospheric lighting emanating from a three-tiered chandelier. Gathered before an evening session are congressmen, staff, Supreme Court justices, press, and, at the far right in the visitors’ gallery, Chief Petalesharo (Pawnee Nation). Petalesharo had visited President Monroe in 1821. Morse spent four months on site in Washington painting more than 60 individuals for the finished picture. The artist was known for his work ethic—he could do as many as four sittings a day.

Morse had high hopes for this painting, believing it would promote his reputation and boost his finances. He toured it in 1823, but it did not excite the public’s interest. At the time, the American people’s taste did not include history painting, which was held in the highest esteem in Europe. Morse continued painting portraits as a means to support himself and his growing family, though he did not value working in the genre.

Marquis de Lafayette

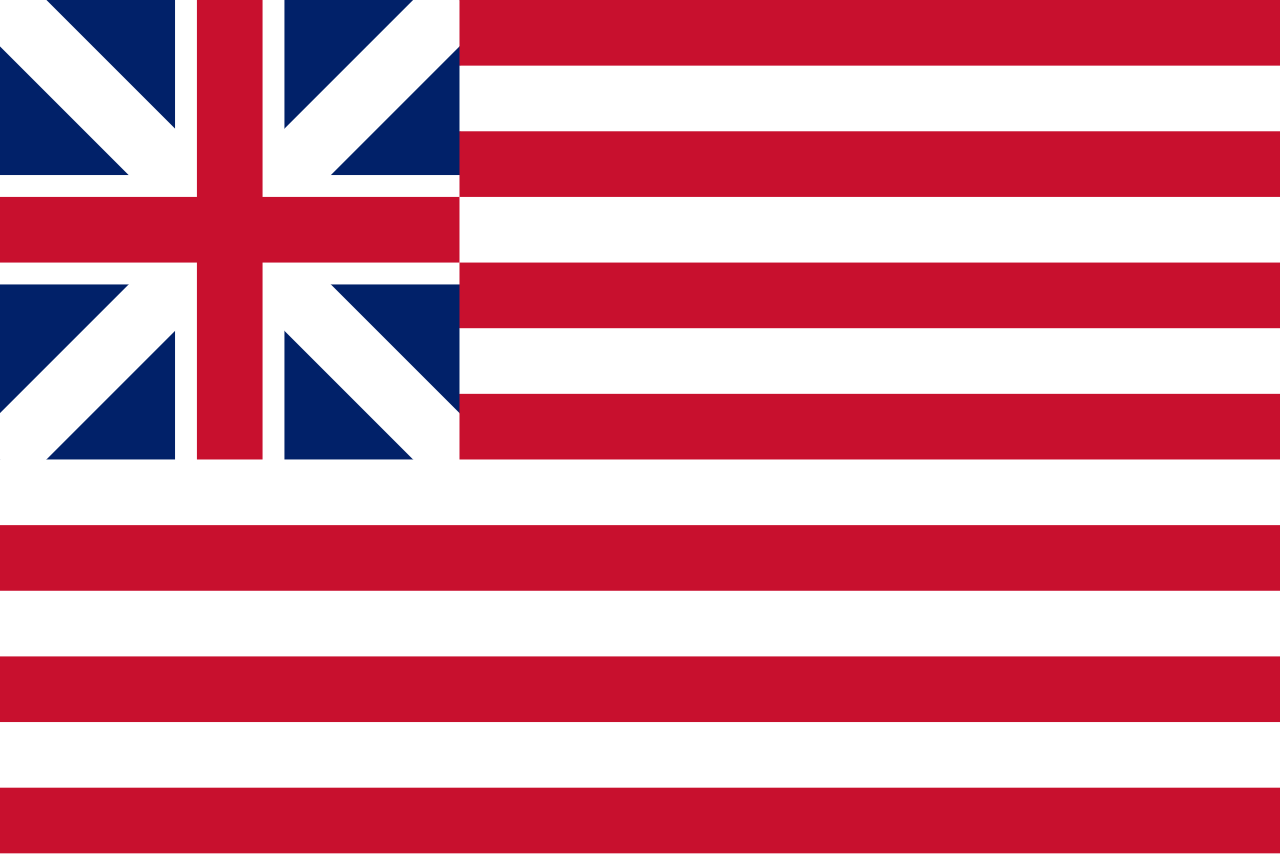

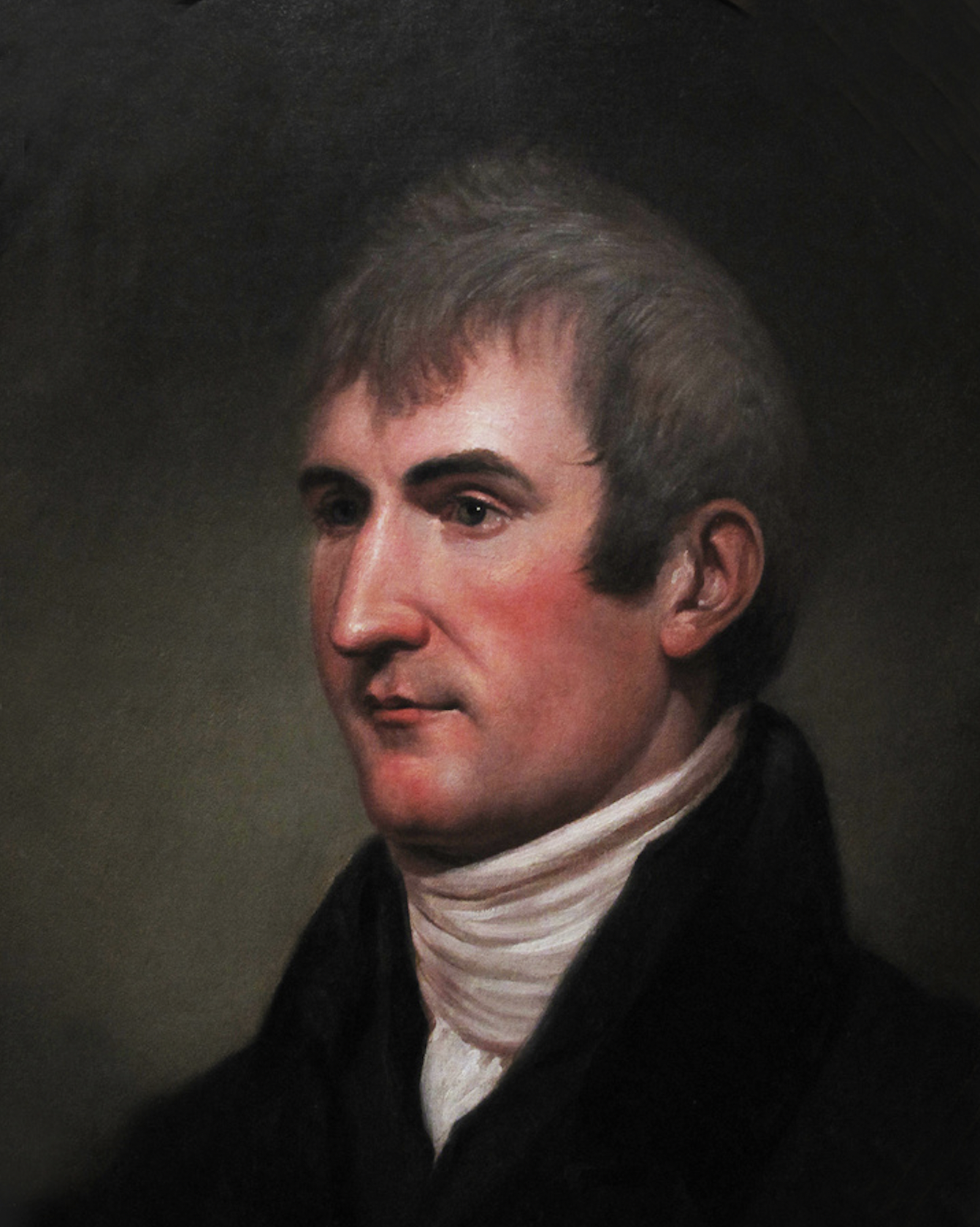

A new opportunity for increasing his prominence came from the city of New York in 1825. Morse was commissioned to create a likeness of the Revolutionary War veteran Marquis de Lafayette (1757–1834). Lafayette was visiting from France to be honored by federal and state governments for his time volunteering in the Continental Army. The full-length result, finished in 1826, is considered among the finest portraits in American art.

Morse painted this national hero with realistic craggy features and positioned him in a symbolic setting. The departed Founding Fathers and friends of Lafayette, Benjamin Franklin and George Washington, are included as sculpted busts against a sunset-colored sky.

While Morse was working in Washington on a painted study of the Marquis (bought in 2005 by Bentonville, Arkansas’s Crystal Bridges Museum of American Art for $1.36 million), a personal tragedy befell him. The museum recounts that Morse received news that his young wife had died unexpectedly while recovering after giving birth to their third child. The news was delivered by a messenger on horseback.

By the time Morse arrived home to New Haven, Connecticut, she had already been buried. The widower’s grief was compounded by the lack of speedy long-distance communication. The museum notes, “he began to think about ways to make the relay of important messages faster.”

Fostering Art Appreciation in America

After his wife’s death, Morse lived and taught in New York. He was elected to the American Academy of the Fine Arts, whose mission was to increase the American public’s appreciation of art. Aspiring artists asked for his tutelage, and he formed a Drawing Association whose pupils included Asher B. Durand and Thomas Cole. Joining forces with his students, Morse founded the National Academy of the Arts of Design in 1826. It was inspired by the Royal Academy, and Morse served as its president.

Many 19th-century artists went on to study there, including Winslow Homer and George Inness. Morse was later appointed a professor of painting and sculpture at New York University, the first such professorship in the country.

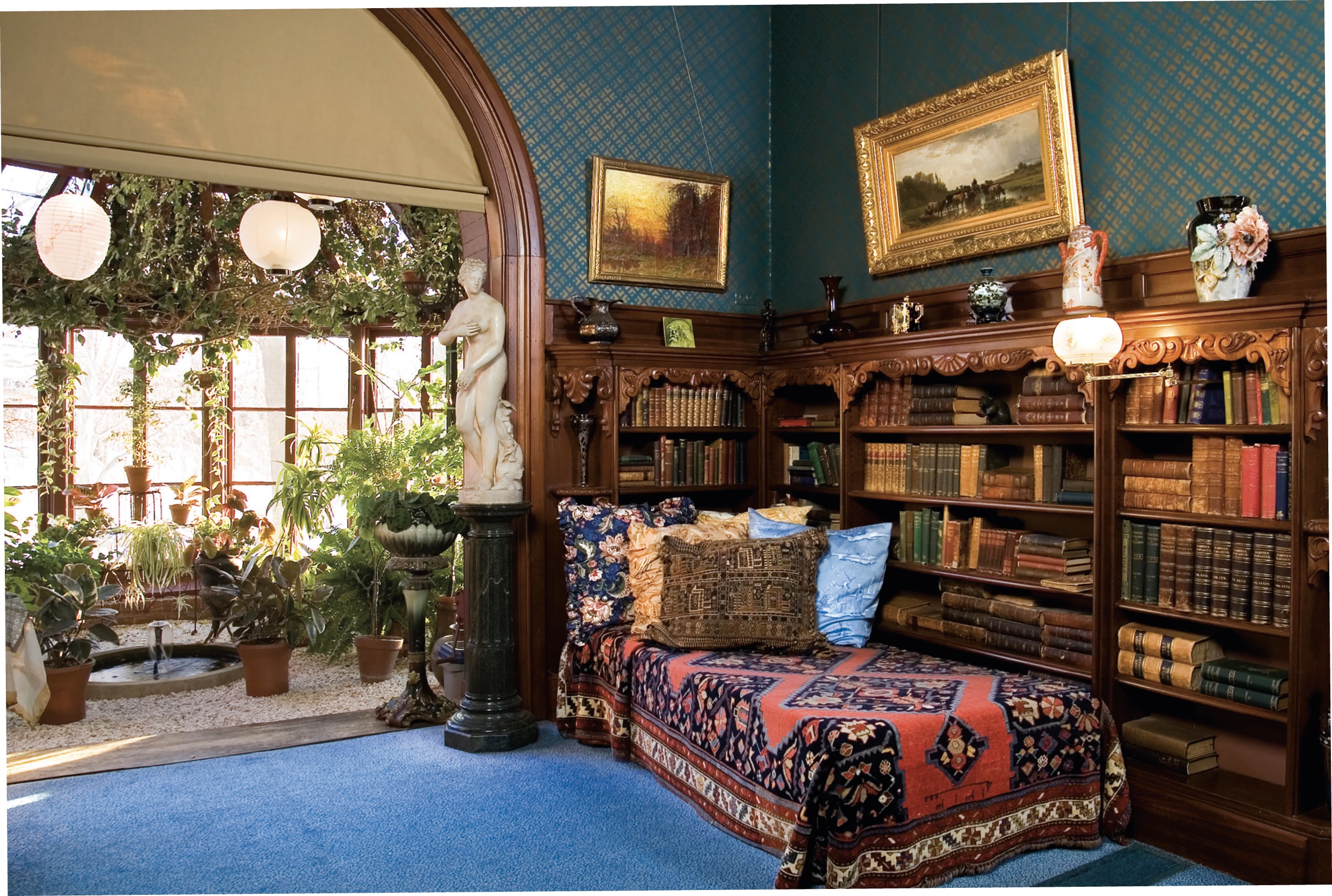

In 1829, Morse went to Paris for a three-year study period. He frequented the Louvre and observed people from different countries and walks of life visiting and experiencing the great works of art. This inspired the quintessential painting of his career, which was also one of his final artworks. “Gallery of the Louvre” is a monumental canvas at 6 feet by 9 feet. He began it in Paris in 1831 and finished the picture in New York in 1833.

Aiming to educate Americans about European art, Morse set the painting in the Louvre’s Salon Carré. Employing artistic license, he gathered 38 paintings and two antiquities, on view in different areas of the museum, into one gallery and displayed them in a “Salon hang,” a dense stacking from almost floor to ceiling.

Masterpieces by Leonardo da Vinci (including the “Mona Lisa”), Caravaggio, Rembrandt, Rubens, Titian, Anthony van Dyck, and Veronese are meticulously reproduced in miniature, along with the famous Roman marble statue “Diana of Versailles.” This complex composition continues the 17th-century tradition of a “gallery picture.” Morse’s is the only major example of such a scene in all of American art.

Morse was a close friend of the American author James Fenimore Cooper, famous for “The Last of the Mohicans,” and gave him a cameo in the painting. The writer can be seen in the corner at left with his family.

He placed this painting on exhibition twice, in New York City and New Haven. Although it was commended by critics and art aficionados, the public was indifferent. The artist was demoralized. After also losing out on a federal commission to paint a monumental work for the U.S. Capitol Rotunda, Morse put down his brush permanently and pursued electrical experiments instead.

An Invention Sparks

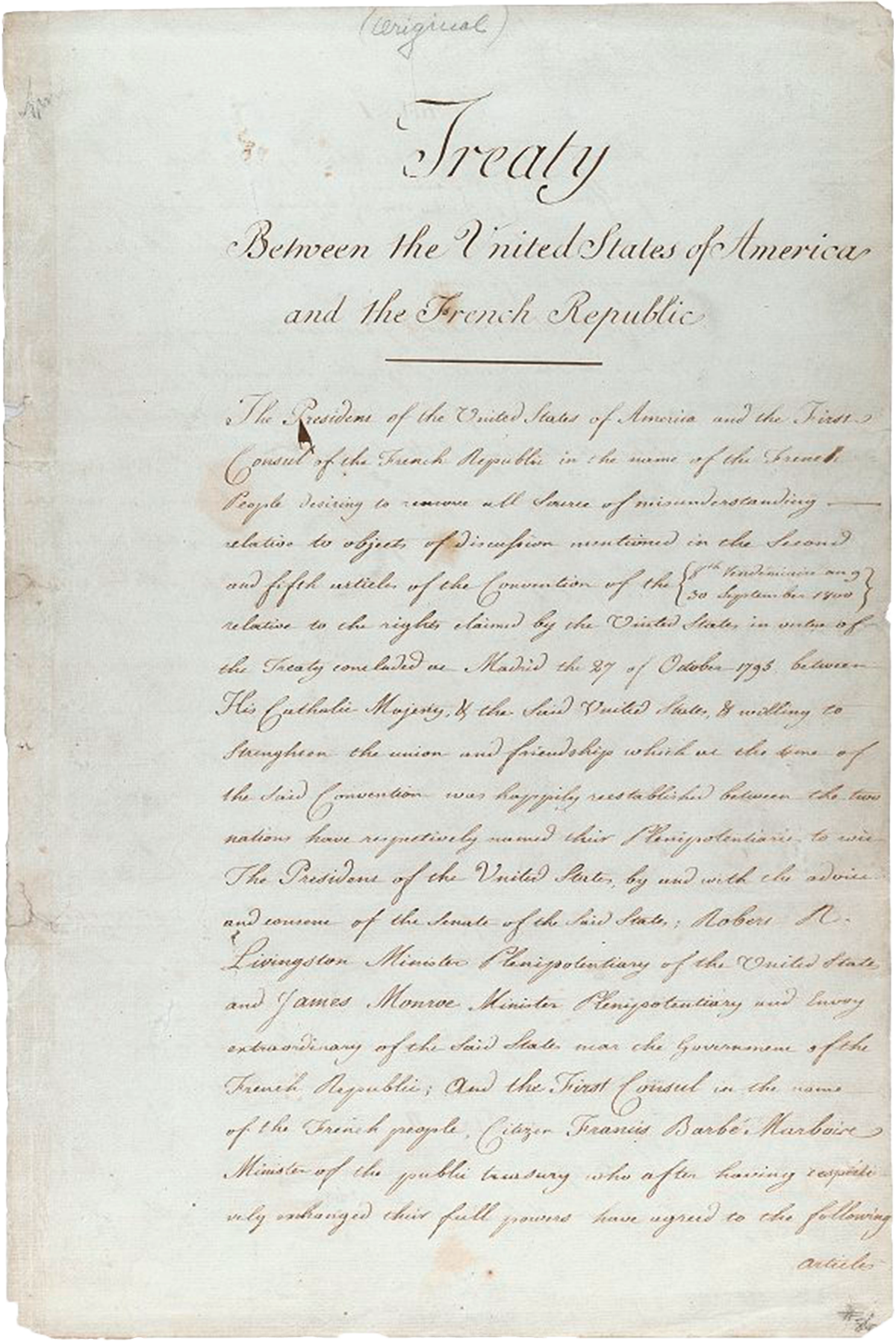

During his transatlantic crossing in 1832 from France to the United States, Morse met Charles Thomas Jackson (1805–1880), who discussed electromagnetism with him in depth and invited Morse to observe Jackson’s experiments. This sparked Morse’s idea for developing a means of speedily transmitting long-distance messages over an electrical wire.

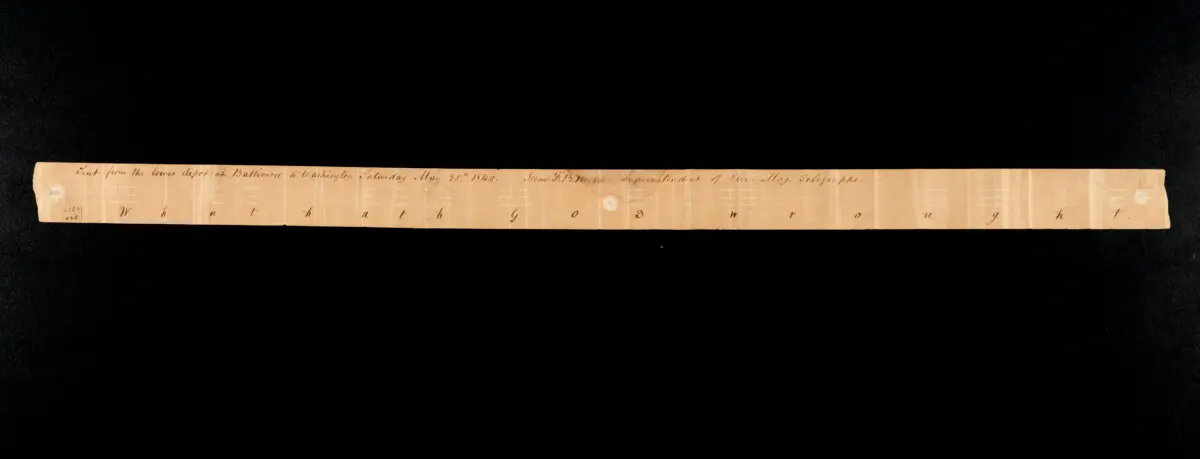

Morse developed a device that could send coded messages through a single-wire telegraph. In 1843, the House of Representatives passed a bill authorizing him to construct an experimental wire between the Supreme Court Room in Washington and a Baltimore railway depot 40 miles away. On May 24, 1844, he sent the first telegraph message over that line. His device was more efficient in design than other electric telegraphs.

This, along with the development of Morse code, a system in which letters are represented by combinations of long and short signals, brought him fame and fortune. Morse’s work transformed long-distance communications technology and is considered the forerunner of today’s email.

From May Issue, Volume VI

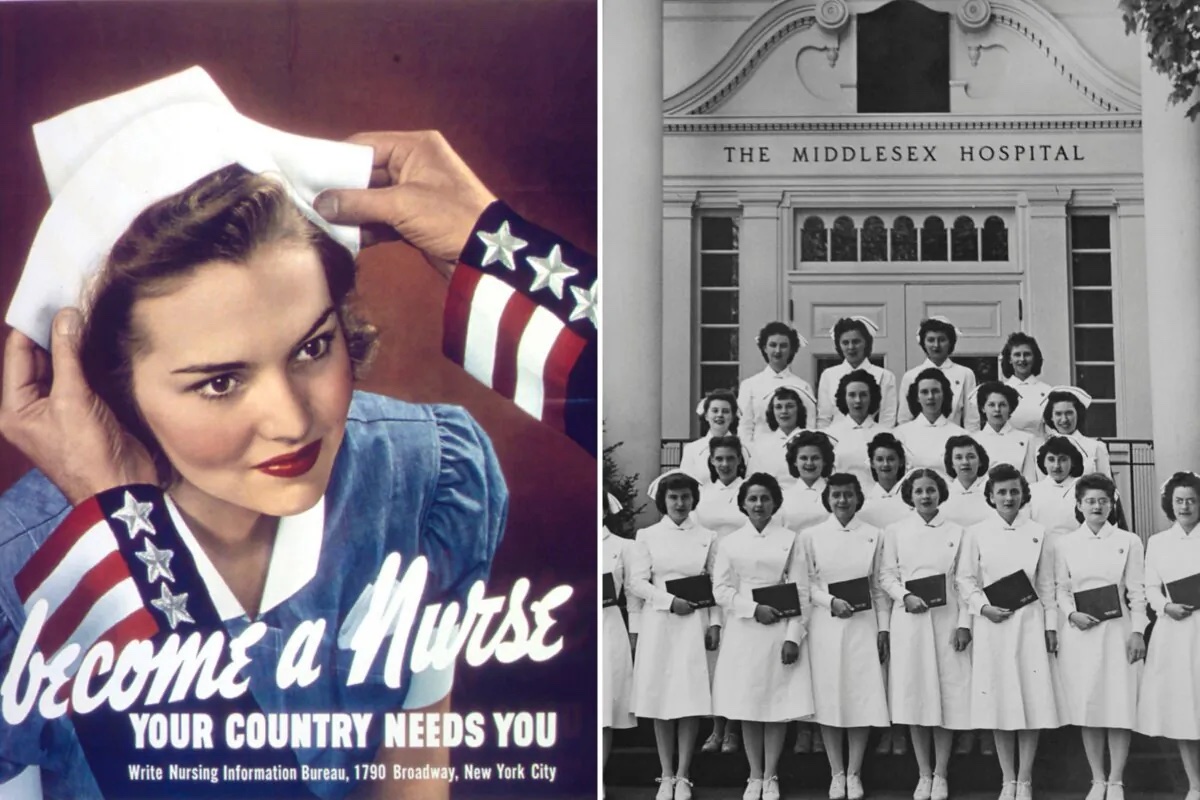

Nurses to the Rescue

Nurses around the country faced different challenges beyond the long hours (sometimes having 12-hour workdays), such as scarce supplies, and in the case of cadets at the University of Washington School of Nursing, a polio epidemic that hit Seattle in the 1950s.
